Lessons Learned Hosting and Maintaining a Mastodon Instance

When Elon Musk bought Twitter, gutted its staff, and welcomed back its most poisonous users, it triggered an exodus. Many, if not most, of Twitter's users still remain, but a migration of this scale hasn't really been witnessed since Digg unleashed version 4 on a revolted userbase in 2010.

Years ago, already seeking an alternative, I joined a Mastodon instance. I didn't find it much fun in 2018, when relatively few people were there. When the migration brought a huge wave of users to the platform, it didn't take long for me to find my people. I locked down my Twitter account and stopped posting there.

Soon after that, I began to consider hosting my own Mastodon instance. I started reading a lot about other people's experiences doing so, and I realized that this would not be nearly as simple as spinning up a WordPress blog. It made me hesitate - yet for some reason, I was drawn to the challenge. I'm not a sysadmin, and this was a great learning opportunity in that respect.

This post isn't about selling you on Mastodon, or teaching you how to run your own instance. Instead, I'm sharing what I've found and what I've learned. There's a lot of important information scattered across the Internet, difficult to find if you don't know where to look. My goal is to compile more of that information in one place, and help point others thinking of running instances in the right direction.

Table of Contents

- Start by Joining Someone Else's Instance

- Hosting With Docker Compose

- Relays Are Useful, but Watch Out...

- Sidekiq Issues Come at You Fast

- Handling All That Media

- Don't Sleep on the Oracle Cloud Free Tier

- Monitoring With Grafana

- Take a Look at Mastodon Forks

- Defederate Early, Often and Proactively

- Don't Forget About Liability

- Backing Up & Migrating Postgres

- Other Links I Want To Mention

A Simplified Overview of Mastodon & the Fediverse

Mastodon, unlike Twitter or Instagram, is not a single web service owned by a global corporation. It's an open-source software platform that connects to a global network of federated social media servers, popularly referred to as the "Fediverse." Mastodon is merely the most common of many platforms that implement shared communication standards (notably ActivityPub). When a user on one instance makes a post, that instance pushes the post to every instance where someone follows that user.

If you want to run your own instance of Mastodon, you'll need to know about the components that make up a functioning installation. A typical, smaller instance will be made up of these services:

- Mastodon (Web): The core web application

- Mastodon (Streaming API): Provides the streaming connections for users to get real-time updates

- Mastodon (Sidekiq): Runs & manages scheduled background tasks, like processing incoming messages

- Postgres: The primary database

- Redis: A key-value store used for caching, as well as tracking timelines and Sidekiq tasks

- Reverse Proxy/Web Server: Something like NGINX or Caddy, which handles incoming HTTP requests and forwards to web/streaming as needed

- Media Store: Could be a folder on the server, but more likely a third-party service like Amazon S3 or Backblaze B2

I set up my instance about a month and a half ago. Now that the dust has settled, these are some of my take-aways from the experience:

Start by Joining Someone Else's Instance

If you jump straight into running your own instance, then you'll find yourself pretty lonely. If you don't already know who to follow, then the best way to find people is through the local timeline, where you see posts from other users on the server. On your own instance, however, you're the only one in there.

Same with the federated timeline, which shows posts from everyone who users on the server follow. On your own instance, it'll just be a partial copy of your timeline - unless you add a relay, which we'll get to.

I highly recommend being on another server to start, especially one aimed at a particular crowd that you identify with. You can find people you'll like following, and they can find you on that local timeline too. When you're happy with who you're following & interacting with, you can migrate without losing that community.

It might also be a good idea to follow other instance administrators. [1] Some of them talk about their job, and they can provide valuable information on occasion! [2]

Hosting With Docker Compose

The official documentation provides instructions for hosting Mastodon, and there are Docker packages available. I wanted to use Docker Compose to organize & control the services, however, and the Mastodon documentation doesn't cover that scenario very well.

I found Ben Tasker's guide to running a Mastodon Instance using docker-compose very helpful! You can pretty much follow it step-by-step and it covered the vast majority of what I needed to do.

I made one tweak to those directions in the name of easier maintenance: I used Nginx Proxy Manager to manage the reverse proxy and SSL. If you do the same, make sure that the locations /api/v1/streaming and /api/v2/streaming both point to your streaming server, not the web server.
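To sanity-check the proxy, here's a minimal POSIX shell sketch. `upstream_for` encodes the rule above: streaming paths go to the streaming server, and everything else goes to web. `check_health` then requests one health endpoint per service through the proxy. The sketch assumes Mastodon's `/health` endpoint on the web service and `/api/v1/streaming/health` on the streaming server, and that both answer `OK`. The upstream names `web` and `streaming` are hypothetical stand-ins for your own service names.

```shell
# Hypothetical upstream names: substitute your own Docker Compose services.
WEB_UPSTREAM="web"
STREAMING_UPSTREAM="streaming"

# Decide which upstream should serve a request path.
# Both streaming prefixes go to the streaming server; everything else to web.
upstream_for() {
  case "$1" in
    /api/v1/streaming|/api/v1/streaming/*|/api/v2/streaming|/api/v2/streaming/*)
      echo "$STREAMING_UPSTREAM" ;;
    *)
      echo "$WEB_UPSTREAM" ;;
  esac
}

# Probe each service's health endpoint through the reverse proxy.
# Assumes both endpoints answer with the body "OK" when healthy.
check_health() {
  local host="$1" path body
  for path in /health /api/v1/streaming/health; do
    if ! body="$(curl -fsS "https://${host}${path}")"; then
      echo "FAIL ${path}"
      return 1
    fi
    if [ "$body" != "OK" ]; then
      echo "UNEXPECTED ${path}: ${body}"
      return 1
    fi
    echo "ok ${path} (should be served by $(upstream_for "$path"))"
  done
}
```

Run `check_health` with your own domain. A failure on the streaming path often means that location still points at the web server.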

Relays Are Useful, but Watch Out...

To add content to your instance beyond yourself and the people you follow, you can connect it to one or more relays. When someone posts on an instance connected to a relay, that post gets relayed to all other connected instances.

Some relays are general-purpose, unmoderated free-for-alls where any instance can connect, and if you join one, who knows what you're getting. Other relays are moderated communities, specific to a certain demographic. They may have less content, but more of it may be meaningful to you.

You won't be able to see all of the posts out there, no matter how many relays you join. The largest instances have little need for relays, since just about everyone is followed by somebody there, so they generally aren't connected to any.

You may find a high volume relay with open sign-ups. This can do a lot to fill your federated timeline, of course, but you're also likely to find out just how robust your setup is.

Sidekiq Issues Come at You Fast

I found one of those higher volume relays a month ago. I was excited to see posts pour into my federated timeline... but then I realized those posts were getting older and older, and so were the posts on my home timeline.

In the administration interface, you'll find a link to the Sidekiq dashboard. Sidekiq, you'll recall, is the service for processing background tasks. Long story short: if you go there and see the Enqueued job count growing and growing, then Sidekiq is being asked to process more jobs than it can handle.
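The same check can be run from the command line. Sidekiq keeps each queue as a Redis list named `queue:<name>`, so sampling that list's length a few times shows which way the backlog is heading. This is a minimal POSIX shell sketch. It assumes a Redis container named `redis` (hypothetical) and no `REDIS_NAMESPACE` prefix on the keys.

```shell
# Sidekiq keeps each queue as a Redis list named "queue:<name>".
# Assumes a container named "redis" (hypothetical) and no REDIS_NAMESPACE.
queue_length() {
  docker exec redis redis-cli LLEN "queue:$1"
}

# Classify enqueued-count samples taken at a fixed interval:
#   growing  - every sample is larger than the one before it
#   draining - the last sample is smaller than the first
#   stable   - anything else
backlog_trend() {
  if [ "$#" -eq 0 ]; then echo "no samples" >&2; return 1; fi
  local first="$1" prev="" cur grew=1
  for cur in "$@"; do
    if [ -n "$prev" ] && [ "$cur" -le "$prev" ]; then grew=0; fi
    prev="$cur"
  done
  if [ "$#" -ge 2 ] && [ "$grew" -eq 1 ]; then
    echo growing
  elif [ "$prev" -lt "$first" ]; then
    echo draining
  else
    echo stable
  fi
}

# Sample one queue N times, INTERVAL seconds apart, then classify.
watch_queue() {
  local queue="$1" n="${2:-5}" interval="${3:-60}" i=0 samples=""
  while [ "$i" -lt "$n" ]; do
    samples="$samples $(queue_length "$queue")"
    i=$((i + 1))
    if [ "$i" -lt "$n" ]; then sleep "$interval"; fi
  done
  # Splitting $samples into separate arguments is intentional here.
  backlog_trend $samples
}
```

For example, `watch_queue default 5 60` takes five samples of the default queue over four minutes. If it reports `growing`, Sidekiq is falling behind.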

This seems to be quite a common issue, especially after the first big wave of ex-Twitter-users hit the Fediverse. Nora Tindall has an excellent post explaining how to increase the throughput between Postgres and Sidekiq, so that it can handle far greater volumes. It involves tuning Postgres with PgTune, creating separate Sidekiq instances for each queue, and providing each instance with as many connections as Postgres can spare.
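Nora's post has the full method. As a rough illustration of the one-process-per-queue idea, this POSIX shell sketch prints one launch command per queue. Treat the queue list as an assumption for Mastodon 4.x and check it against your version's documentation. `-c` (concurrency) and `-q` (queue) are standard Sidekiq options, and `DB_POOL` is the environment variable Mastodon uses to size each process's database pool. The concurrency value is yours to choose.

```shell
# Queue names as of Mastodon 4.x (an assumption: check your version's docs).
QUEUES="default push pull ingress mailers scheduler"

# Print one launch command per queue. Each process gets CONCURRENCY threads
# and a matching DB_POOL so no thread has to wait for a database connection.
# Every queue, including scheduler, gets exactly one process here, which
# matters because the scheduler queue should only ever run in one process.
sidekiq_commands() {
  local concurrency="$1" q
  for q in $QUEUES; do
    printf 'DB_POOL=%s bundle exec sidekiq -c %s -q %s\n' "$concurrency" "$concurrency" "$q"
  done
}
```

Each line maps to one Sidekiq service in your Compose file. At 10 threads each, that's 60 database connections before the web and streaming services take theirs.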

For my single-user server, I only ran PgTune and configured my single Sidekiq process (which handles every queue) to use 25 connections. This has proved to be more than enough in my experience, even when a user with tens of thousands of followers shares my posts.
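Whichever layout you pick, the connections have to add up. This back-of-the-envelope POSIX shell sketch takes the following inputs:

- Postgres's `max_connections` (100 by default, though PgTune may suggest another value)
- the web service's process count and threads per process (Puma's `WEB_CONCURRENCY` and `MAX_THREADS`)
- the streaming server's pool size
- one concurrency number per Sidekiq process

It assumes one connection per web thread and per Sidekiq thread.

```shell
# Rough Postgres connection budget. Assumes one connection per Puma thread
# (web processes x threads per process), a fixed pool for the streaming
# server, and one connection per Sidekiq thread. All numbers are your own.
# Usage: connection_budget MAX_CONN WEB_PROCS WEB_THREADS STREAMING_POOL SIDEKIQ_CONCURRENCY...
connection_budget() {
  local max_conn="$1" web_procs="$2" web_threads="$3" streaming="$4" total c
  shift 4
  total=$((web_procs * web_threads + streaming))
  for c in "$@"; do
    total=$((total + c))
  done
  echo "needed=${total} available=${max_conn} spare=$((max_conn - total))"
}
```

With hypothetical web and streaming figures plus one Sidekiq process at 25, `connection_budget 100 2 5 10 25` prints `needed=45 available=100 spare=55`. A negative spare means you've promised more connections than Postgres allows.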

Speaking of Nóva, she and her fellow Hachyderm admins have experienced plenty of scaling problems as their server gained tens of thousands of users over the course of a few weeks. They've been very open about it, posting and streaming through it all. One of their other admins, Hazel Weakly, wrote up Scaling Mastodon: The Compendium, where they gathered scaling tips from all over the place into one long post. If I ever run into further issues with my instance, that will be one of the first places I turn to.

Handling All That Media

My server receives around 25,000 posts a day from its relays and (to a much lesser extent) from the people I follow. A lot of those posts have attached media, and the storage requirements add up quick.

Currently, there is 50GB of media attachments stored for my server. This is despite quite aggressive retention settings - 1 day for cached media, 7 days for cached content and 14 days for user archives.

If storage space is at a premium - and on most cheap VPSes, it very much is - don't expect to store media files locally without running into trouble. I would use cloud storage right from the beginning, not least because moving local files to the cloud later on is a pain in the butt.

I ended up going with Backblaze B2 for storing my media. While I've heard of high latency with B2 [3], it hasn't been unbearable for me. I followed a Techbits.io guide to using Backblaze B2 with Mastodon that worked flawlessly for me.
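Most S3-compatible setups, B2 included, come down to a handful of variables in Mastodon's `.env.production`. This POSIX shell sketch only checks that the variables it treats as required are present and non-empty. That list is an assumption, so match it to whichever guide you follow. `S3_ALIAS_HOST` points media URLs at a CDN hostname, such as a Cloudflare-fronted domain. It is optional and not checked here.

```shell
# Keys this sketch treats as required for S3-compatible storage (assumption:
# adjust to the guide you follow). S3_ALIAS_HOST is optional and not checked.
REQUIRED_S3_KEYS="S3_ENABLED S3_BUCKET AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY S3_ENDPOINT"

# Print each required key that is missing or empty in the given env file.
missing_s3_keys() {
  local env_file="$1" key
  for key in $REQUIRED_S3_KEYS; do
    if ! grep -Eq "^${key}=.+" "$env_file"; then
      echo "$key"
    fi
  done
}
```

`missing_s3_keys .env.production` prints nothing when every listed key has a value.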

I also followed their guide for serving B2 with Cloudflare (yes, I know, Cloudflare is problematic and I'm looking to move away from it). The combination means that all of this data costs me about a dollar per month to host & serve.

If I need to move, I plan to take a look at Jortage, a "communal cloud" designed specifically for hosting deduplicated Fediverse media. There are also S3-compatible self-hosting options, like Garage and MinIO.

Don't Sleep on the Oracle Cloud Free Tier

That dollar per month is the most expensive part of my operation, [4] because the actual server is free: an ARM-powered virtual private server, offered as part of Oracle Cloud's Free Tier. 24GB memory & 100GB hard disk space, all for no charge. Marc-André Moreau has a great writeup about it on Cohost.

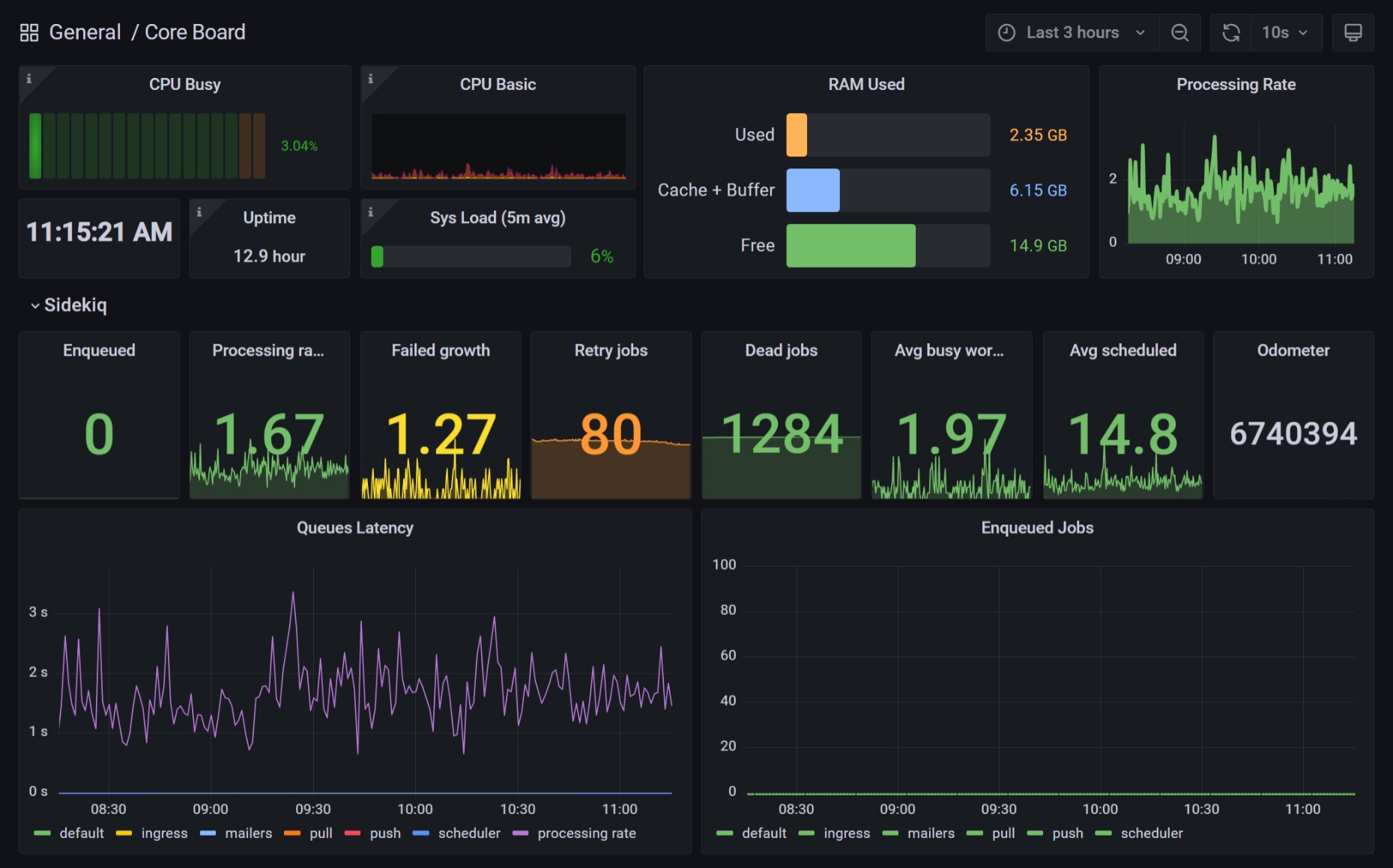

One catch is that not everything out there has an ARM build. This hasn't been a problem for any of the services mentioned above, but the Sidekiq metrics exporter that I use to monitor my Sidekiq queues with Grafana does not have an ARM Docker package. I ended up checking out the Git repository and building the Docker image on the Oracle server, and it's run without any issues.
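The build-it-yourself workaround is short. A native build on the ARM server produces an image for the server's own architecture. This is a minimal POSIX shell sketch that assumes `git` and Docker are installed. The repository URL and image tag you pass in are placeholders.

```shell
# Map `uname -m` output to the Docker platform it corresponds to.
docker_platform() {
  case "$1" in
    x86_64|amd64) echo linux/amd64 ;;
    aarch64|arm64) echo linux/arm64 ;;
    *) echo "unsupported architecture: $1" >&2; return 1 ;;
  esac
}

# Clone a repository and build its Dockerfile on this machine. A native build
# targets the host's own architecture. REPO_URL and TAG are placeholders.
build_local_image() {
  local repo_url="$1" tag="$2" platform dir
  platform="$(docker_platform "$(uname -m)")" || return 1
  dir="$(mktemp -d)" || return 1
  echo "Building ${tag} for ${platform}"
  git clone --depth 1 "$repo_url" "$dir" && docker build -t "$tag" "$dir"
}
```

After the build, reference the local tag in your Compose file in place of the published image.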

Monitoring With Grafana

This is probably a luxury feature for a small instance, but you can set up a Grafana dashboard to monitor your system in real time, as I have.

The IPng Networks series on Mastodon monitoring is a must-read for getting monitoring working, and it has tips on hosting a Mastodon instance in general. I also use the Sidekiq Prometheus exporter that I mentioned earlier for the all-important queue metrics.
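Before wiring up Grafana, it can help to read an exporter's output directly. Prometheus exporters serve plain-text metrics over HTTP, one series per line. This small awk-based POSIX shell helper pulls out the value of a single series. Metric names and the exporter's address vary, so check what your exporter actually publishes at its `/metrics` endpoint. The parser assumes label values contain no spaces.

```shell
# Print the value(s) of one metric from Prometheus text-format input on stdin.
# Optional second argument: a substring (e.g. a label) the series must contain.
# Assumes label values contain no spaces, so field 1 is the series, field 2 the value.
metric_value() {
  awk -v name="$1" -v filt="${2:-}" '
    /^#/ { next }
    NF >= 2 {
      series = $1
      base = series
      sub(/[{].*/, "", base)
      if (base == name && (filt == "" || index(series, filt) > 0)) print $2
    }'
}
```

Pipe the exporter's `/metrics` output into `metric_value`, passing the metric name and, optionally, a label such as `queue="default"` to narrow it down.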

Take a Look at Mastodon Forks

Most instances you'll encounter are running on vanilla Mastodon, and it's what most people are familiar with. Some aren't running on Mastodon at all - those are outside the scope of this post - and others are running modified forks of the original Mastodon source.

Running a fork is not risk-free. With fewer users and fewer resources than the mainline project, you may be more exposed to security issues, or even risk being left behind if the project is abandoned. The trade-off is getting features that the Mastodon developers either haven't implemented, or refuse to implement.

From what I have seen, the two most popular forks are glitch-soc and Hometown. I'm using glitch, as are some medium-sized instances like eldritch.cafe. It includes nice bonus features like support for longer posts, Markdown support, [5] and posts that are only visible on the same instance.

Defederate Early, Often and Proactively

There are a number of instances out there full of awful people, sharing horrible content with everyone they can - content that may even be illegal in your country. You don't want that anywhere near you if you can help it.

The good news is that Mastodon makes it easy to block other instances from communicating with your server, and it also supports "limiting" instances in cases where a full block isn't warranted. The bad news is that it's on you to put in those blocks.
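Blocks can also be created without clicking through the interface. Mastodon 4.0 and later expose an admin API endpoint, `POST /api/v1/admin/domain_blocks`, which takes a domain and a severity. The interface's "Limit" corresponds to the API's `silence` severity. Check your version's API documentation before relying on this. In the minimal POSIX shell sketch below, `INSTANCE` and `TOKEN` are placeholders you set yourself, and the token is assumed to carry the `admin:write:domain_blocks` scope.

```shell
# Map the admin interface's wording to the API's severity values
# ("Limit" in the UI is the "silence" severity in the API).
severity_for() {
  case "$1" in
    limit) echo silence ;;
    suspend) echo suspend ;;
    *) echo "unknown action: $1" >&2; return 1 ;;
  esac
}

# Create a domain block via the admin API. INSTANCE and TOKEN are
# placeholders; the token needs the admin:write:domain_blocks scope.
block_domain() {
  local domain="$1" severity
  severity="$(severity_for "$2")" || return 1
  curl -fsS "https://${INSTANCE}/api/v1/admin/domain_blocks" \
    -H "Authorization: Bearer ${TOKEN}" \
    --data-urlencode "domain=${domain}" \
    --data-urlencode "severity=${severity}"
}
```

After setting `INSTANCE` and `TOKEN`, `block_domain bad.example limit` limits that instance, and `block_domain bad.example suspend` blocks it fully.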

The Fediverse community has informally settled on using the #Fediblock hashtag to inform other admins & users of bad actors that should be considered for defederation. Naturally, this can be a magnet for drama and interference by those same bad actors, but at time of writing it's the best way to spread the word about these troublemakers.

If you're running a one-user instance, you've got another problem: #Fediblock will be nearly empty! Even with relays, you'll probably only see a fraction of the posts carrying the hashtag. Furthermore, you'll be starting fresh, and people don't really talk about instances that have long since been blocked by the community - leaving you potentially unaware of them.

When starting out, I copied the blocklist from my previous server, and imported all 400 or so. [6] I didn't copy them blindly: especially for the larger instances, I reviewed whether they should be blocked or not, and made my own determination.
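For the import itself, footnote [6] describes writing raw INSERT statements. This POSIX shell sketch generates statements like those from a plain list of domains, one per line. The column list and the numeric severity (assumed here to be 1 for suspend) are assumptions, so check them against `db/schema.rb` for your Mastodon version before running anything. Lines that aren't plain lowercase hostnames are skipped rather than escaped.

```shell
# Severity code assumed for "suspend" (assumed enum: silence=0, suspend=1, noop=2).
SEVERITY_SUSPEND=1

# Read domains (one per line) on stdin and print one INSERT per domain.
# Column names are assumptions: check db/schema.rb for your Mastodon version.
# Anything that isn't a plain lowercase hostname is skipped, not escaped.
domain_block_inserts() {
  local domain
  while IFS= read -r domain || [ -n "$domain" ]; do
    domain="$(printf '%s' "$domain" | tr -d '[:space:]' | tr '[:upper:]' '[:lower:]')"
    case "$domain" in
      ''|*[!a-z0-9.-]*) continue ;;
    esac
    printf "INSERT INTO domain_blocks (domain, severity, created_at, updated_at) VALUES ('%s', %d, now(), now()) ON CONFLICT DO NOTHING;\n" \
      "$domain" "$SEVERITY_SUSPEND"
  done
}
```

Write the output to a file, review it, and only then feed it to `psql`.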

Next, I used my RSS reader to subscribe to mastodon.social's #Fediblock hashtag - every hashtag can be subscribed to via RSS, without an account - to get all the #Fediblock updates that the world's largest Mastodon instance is aware of.
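The feed URL follows a simple pattern. This tiny POSIX shell helper assumes Mastodon's `/tags/<tag>.rss` route, which is where mastodon.social serves hashtag feeds. Lowercasing the tag is a tidiness choice on my part.

```shell
# Build the public RSS feed URL for a hashtag on a Mastodon instance,
# assuming the /tags/<tag>.rss route. A leading "#" is stripped and the
# tag is lowercased for tidiness.
hashtag_feed_url() {
  local instance="$1" tag="${2#\#}"
  tag="$(printf '%s' "$tag" | tr '[:upper:]' '[:lower:]')"
  printf 'https://%s/tags/%s.rss\n' "$instance" "$tag"
}
```

`hashtag_feed_url mastodon.social '#Fediblock'` prints `https://mastodon.social/tags/fediblock.rss`, which you can paste into any RSS reader.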

I actually recommend using an RSS reader for this regardless of where your account lives. I only check the feed every week or so, and an RSS reader will let you mark items as read or unread, helping you keep track of what you've processed. Further, a good RSS reader will let you search the feed, so you can look up important context when necessary.

Don't Forget About Liability

There's an important topic that I'm not really qualified to talk about, nor do I hear much about it from other admins: liability. I'm not a lawyer or even mildly interested in this stuff, but we shouldn't ignore the topic.

Everyone thinking of running an instance (in the US, particularly) should read Denise's guide to potential liability pitfalls for people running a Mastodon instance. It's not legal advice, but it is a very comprehensive look at all the various legal trouble you could get into by hosting content on the web.

Backing Up & Migrating Postgres

My instance was originally on my home media server. I first attempted to move it to Oracle a month or so ago, but it failed when the database didn't restore correctly.

I didn't have any luck with the instructions on the Mastodon documentation site, but I did eventually figure it out. I practiced these actions outside of the migration before doing them for real, to make sure it would work.

The below directions assume you use a Docker container for Postgres named postgres on both servers, and that your database is named mastodon:

- Shut down Mastodon, so that database writes stop
  - Don't shut down Postgres! You need that
- Dump the Mastodon database to a gzip archive

      docker exec postgres pg_dump -U postgres --clean --no-password mastodon | gzip > postgres-backup-mastodon.sql.gz

- Dump the global objects (roles and tablespaces) of the Postgres server

      docker exec postgres pg_dumpall -U postgres --clean --no-password --globals-only > postgres-backup-globals.sql

- Copy both files to the target server
- Restore the globals to the new Postgres server

      cat postgres-backup-globals.sql | docker exec -i postgres psql -U postgres

- Restore the Mastodon database to the new Postgres server

      zcat postgres-backup-mastodon.sql.gz | docker exec -i postgres psql -U postgres mastodon
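If you'd rather script the migration, here's a minimal POSIX shell sketch of the same steps as functions. It makes the same assumptions as above: a container named postgres on each server and a database named mastodon. It also assumes the empty mastodon database already exists on the target, for example because the official Postgres image created it from `POSTGRES_DB`.

It differs from the manual steps in a few deliberate ways:

- The database dump adds `--if-exists`, so its DROP statements don't fail on a fresh server.
- The globals dump leaves out `--clean`.
- The restore uses psql's `ON_ERROR_STOP`, so a partial restore fails loudly.
- `verify_dump` checks the archive before anything is restored.

The function names are illustrative.

```shell
# Assumes a container named "postgres" on each server, a database named
# "mastodon", and (on the target) that the empty database already exists.

# Old server, with Mastodon stopped: dump the database, then the globals.
dump_mastodon() {
  docker exec postgres pg_dump -U postgres --clean --if-exists --no-password mastodon \
    > postgres-backup-mastodon.sql &&
    gzip -f postgres-backup-mastodon.sql &&
    docker exec postgres pg_dumpall -U postgres --globals-only --no-password \
      > postgres-backup-globals.sql
}

# Either server: succeed only for a non-empty, intact gzip file.
verify_dump() {
  [ -s "$1" ] && gzip -t "$1" 2>/dev/null
}

# New server: restore globals first, then the database.
restore_mastodon() {
  verify_dump postgres-backup-mastodon.sql.gz || {
    echo "postgres-backup-mastodon.sql.gz is missing or corrupt" >&2
    return 1
  }
  # Errors about roles that already exist (such as "postgres") are expected here.
  docker exec -i postgres psql -U postgres < postgres-backup-globals.sql
  # Stop at the first error so a partial restore can't go unnoticed.
  gzip -dc postgres-backup-mastodon.sql.gz |
    docker exec -i postgres psql -v ON_ERROR_STOP=1 -U postgres mastodon
}
```

Run `dump_mastodon` on the old server, copy both files across, then run `restore_mastodon` on the new one. If `ON_ERROR_STOP` trips on an error you know is harmless, drop that flag and read psql's output instead.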

Other Links I Want To Mention

I think that covers just about everything I want to talk about regarding hosting a Mastodon instance! There are other posts out there I want to share, however, that I didn't find a spot for above:

- Fedi.Tips - an unofficial guide to Mastodon & the Fediverse

- Aurynn Shaw's case study on Raspberry Pi's disastrous run-in with the Fediverse

- Posts about running a Mastodon instance from chaos.social's co-admins, Leah and Rixx

- Mastodon.world Blog - ...And then, November happened..

- Empty Coffee - Notes on Standing Up a Mastodon Server

Update - February 9, 2023

If you've read this far, then thank you! I hope your adventure in running Mastodon is as rewarding as mine has been so far.

Again, I'm just a hobbyist, and I'm neither a sysadmin nor a Mastodon developer. I may have some of these details wrong. If I do, feel free to reach out and let me know!

[1] If you're looking for suggestions, Aurynn Shaw is very good at spotting trouble in the Fediverse. Kris Nóva and Esther are worth following as well.

[2] As a bonus: when a major event happens, and a surge of new users buries the servers, it's fun to watch their descent into madness. 🤣

[3] I looked for the post I read that had more details about this, but I don't think it exists anymore.

[4] ...ok, the domain name is the most expensive part. I've had that for a while, though.

[5] Be careful if you actually use that. Most of your followers are using regular Mastodon, which doesn't really support formatted HTML to that extent. Links work as expected, but everything else mainly renders as plain text. I'd avoid lists in particular.

[6] There may be automated import tools, but I never found them. I simply created 400 INSERT statements and injected them directly into the domain_blocks table in Postgres, like an animal. The management page was out of sync at first (Redis cache?), but it worked without a hitch.